Differentiable volumetric rendering-based methods have made significant progress in novel view synthesis. On one hand, innovative methods have replaced the Neural Radiance Fields (NeRF) network with locally parameterized structures, enabling high-quality renderings in a reasonable time. On the other hand, approaches have used differentiable splatting instead of NeRF’s ray casting to optimize radiance fields rapidly using Gaussian kernels, allowing for fine adaptation to the scene. However, differentiable ray casting of irregularly spaced kernels has scarcely been explored, while splatting, despite enabling fast rendering times, is susceptible to clearly visible artifacts.

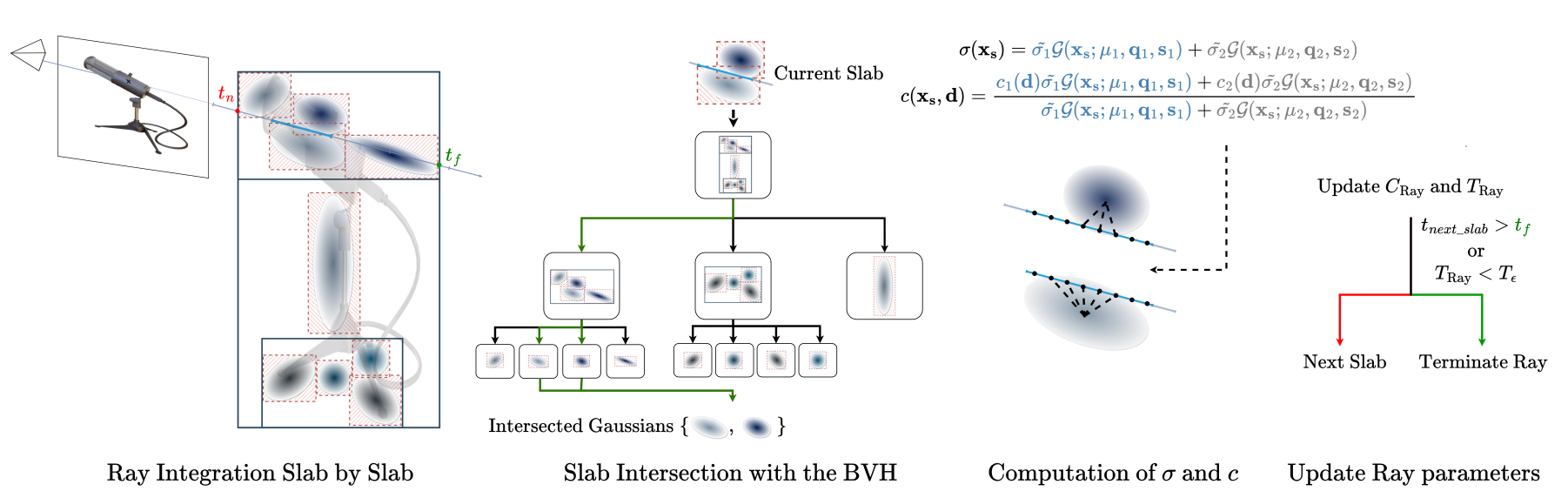

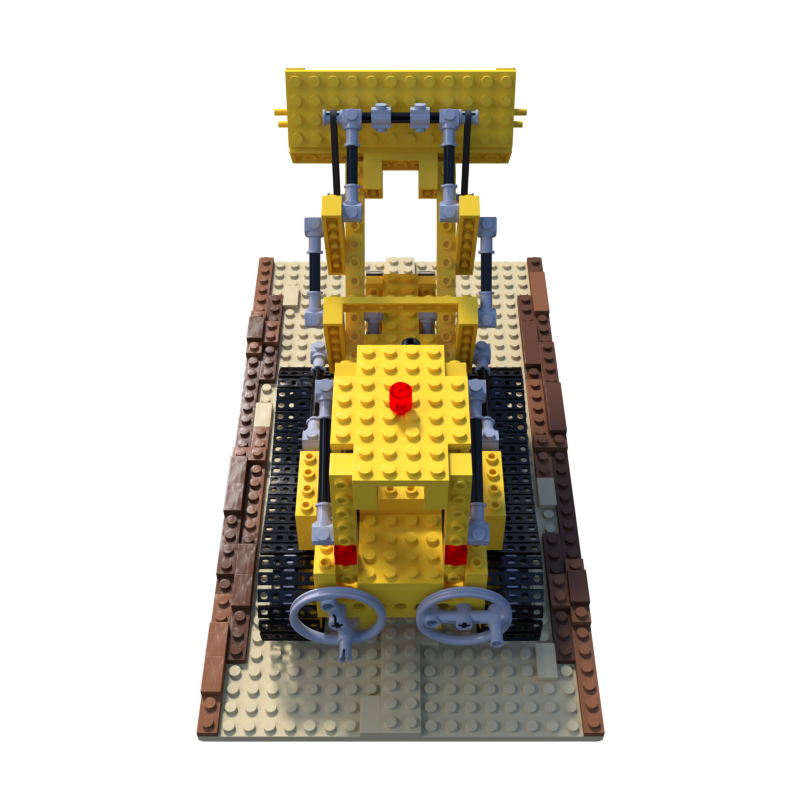

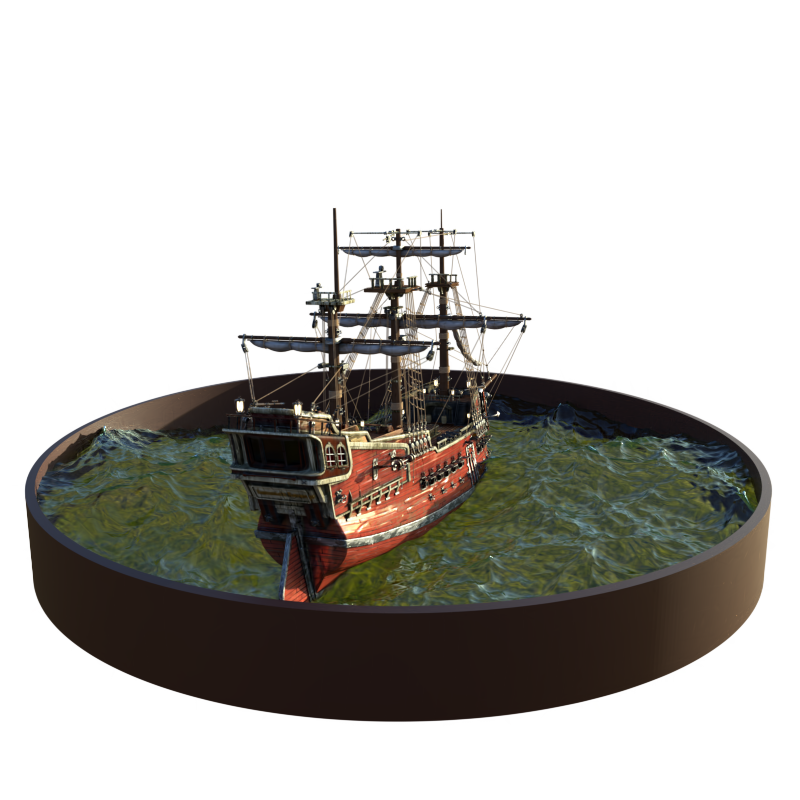

Our work closes this gap by providing a physically consistent formulation of the emitted radiance c and density σ, decomposed into Gaussian functions associated with Spherical Gaussians/Harmonics for all-frequency colorimetric representation. We also introduce a method enabling differentiable ray casting of irregularly distributed Gaussians using an algorithm that integrates radiance fields slab by slab and leverages a bounding volume hierarchy (BVH). This allows our approach to finely adapt to the scene while avoiding splatting artifacts. As a result, we achieve superior rendering quality compared to the state of the art while maintaining reasonable training times and achieving inference speeds of 25 FPS on the Blender dataset. Associated code will be released publicly on GitHub.
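To make the slab-by-slab idea concrete, here is a minimal NumPy sketch of casting one ray through a Gaussian-decomposed radiance field. It is not the RayGauss implementation, and it rests on several assumptions. The density is taken as a sum of weighted Gaussian kernels, σ(x) = Σᵢ σᵢ φᵢ(x). The color is assumed to be the density-weighted blend Σᵢ σᵢ φᵢ(x) cᵢ / σ(x), with constant per-Gaussian colors standing in for the Spherical Gaussian/Harmonic terms. Each slab is integrated with midpoint emission-absorption quadrature. Finally, a brute-force bounding-sphere test replaces the BVH query. All names (`cull_gaussians`, `fields`, `render_ray`) and parameters (`slab_len`, `samples_per_slab`, `t_min`) are hypothetical.

```python
import numpy as np


def cull_gaussians(origin, direction, means, inv_covs, t_near, t_far, k=3.0):
    """Keep Gaussians whose k-sigma bounding sphere touches the ray segment [t_near, t_far].

    Hypothetical brute-force stand-in for a BVH query: the radius is
    k * sqrt(largest covariance eigenvalue) = k / sqrt(smallest eigenvalue of Sigma^-1).
    """
    radius = k / np.sqrt(np.linalg.eigvalsh(inv_covs)[:, 0])       # (G,)
    rel = means - origin                                           # (G, 3)
    t_closest = rel @ direction                                    # (G,) ray parameter of closest point
    dist = np.linalg.norm(rel - t_closest[:, None] * direction, axis=1)
    return (dist <= radius) & (t_closest + radius >= t_near) & (t_closest - radius <= t_far)


def gaussian_weights(points, means, inv_covs):
    """phi_i(x) = exp(-0.5 (x - mu_i)^T Sigma_i^-1 (x - mu_i)) for each point/Gaussian pair -> (P, G)."""
    d = points[:, None, :] - means[None, :, :]                     # (P, G, 3)
    mahal = np.einsum("pgi,gij,pgj->pg", d, inv_covs, d)           # squared Mahalanobis distance
    return np.exp(-0.5 * mahal)


def fields(points, means, inv_covs, sigmas, colors):
    """Density sigma(x) = sum_i sigma_i phi_i(x); color assumed to be the density-weighted blend of c_i."""
    w = sigmas[None, :] * gaussian_weights(points, means, inv_covs)  # (P, G)
    density = w.sum(axis=1)                                          # (P,)
    color = (w @ colors) / np.maximum(density, 1e-12)[:, None]       # (P, 3), guarded against 0 / 0
    return density, color


def render_ray(origin, direction, means, inv_covs, sigmas, colors,
               t_near=0.0, t_far=4.0, slab_len=0.5, samples_per_slab=8, t_min=1e-4):
    """Front-to-back emission-absorption integration, processed one slab of the ray at a time."""
    direction = direction / np.linalg.norm(direction)
    keep = cull_gaussians(origin, direction, means, inv_covs, t_near, t_far)
    radiance, transmittance = np.zeros(3), 1.0
    if not keep.any():                                   # nothing overlaps the ray: empty space
        return radiance, transmittance
    means, inv_covs, sigmas, colors = means[keep], inv_covs[keep], sigmas[keep], colors[keep]

    t0 = t_near
    while t0 < t_far and transmittance >= t_min:         # early ray termination between slabs
        t1 = min(t0 + slab_len, t_far)
        delta = (t1 - t0) / samples_per_slab
        ts = t0 + delta * (np.arange(samples_per_slab) + 0.5)   # midpoint samples in this slab
        pts = origin + ts[:, None] * direction
        density, color = fields(pts, means, inv_covs, sigmas, colors)
        for s in range(samples_per_slab):
            alpha = 1.0 - np.exp(-density[s] * delta)    # opacity of one ray segment
            radiance += transmittance * alpha * color[s]
            transmittance *= 1.0 - alpha
        t0 = t1
    return radiance, transmittance
```

For example, a single dense red Gaussian centered at (0, 0, 2) yields a nearly opaque red pixel for a ray cast along +z. A ray cast along −z is culled before any slab is evaluated and returns zero radiance with full transmittance. Processing the ray in slabs lets the sketch stop early once the transmittance is negligible, so the remaining slabs are never evaluated.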

@INPROCEEDINGS{blanc2025raygauss,

author={Blanc, Hugo and Deschaud, Jean-Emmanuel and Paljic, Alexis},

booktitle={2025 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV)},

title={RayGauss: Volumetric Gaussian-Based Ray Casting for Photorealistic Novel View Synthesis},

year={2025},

volume={},

number={},

pages={1808-1817},

keywords={Training;Hands;Casting;Computer vision;Rendering (computer graphics);Neural radiance field;Inference algorithms;Slabs;Kernel;Videos;volume ray casting;differentiable rendering;radiance fields;novel view synthesis},

doi={10.1109/WACV61041.2025.00183}

}